Dataflow

What is Dataflow?

Dataflow is a fully managed stream and batch processing service that lets you create data pipelines that ingest, transform, and analyze data in real time or in batch mode.

Dataflow is based on Apache Beam, an open-source, unified model for defining both batch and streaming data-parallel processing pipelines.

With Apache Beam, you can write your pipeline using a language-specific SDK (Java, Python, or Go).
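As a rough illustration of what a pipeline written with the Python SDK can look like, here is a minimal word-count sketch. It assumes the `apache-beam` package is installed; the helper names (`split_words`, `format_count`, `run`) and the step labels are made up for this example. With no runner specified, Beam runs the pipeline locally on its DirectRunner rather than on Dataflow.

```python
def split_words(line):
    """Transform step: break one line of text into lowercase words."""
    return line.lower().split()


def format_count(word_count):
    """Transform step: render a (word, count) pair as a readable string."""
    word, count = word_count
    return f"{word}: {count}"


def run(lines):
    """Build and run a small batch pipeline over an in-memory list of lines."""
    # Assumption: apache-beam is installed. It is imported here so the
    # helper functions above remain usable without Beam.
    import apache_beam as beam

    with beam.Pipeline() as pipeline:
        (
            pipeline
            | "Ingest" >> beam.Create(lines)                     # ingest
            | "SplitWords" >> beam.FlatMap(split_words)          # transform
            | "PairWithOne" >> beam.Map(lambda word: (word, 1))
            | "CountPerWord" >> beam.CombinePerKey(sum)          # analyze
            | "Format" >> beam.Map(format_count)
            | "Print" >> beam.Map(print)
        )
```

Calling `run(["to be or not to be"])` should print one line per distinct word; the order of the printed lines is not guaranteed.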

Advantages

- Fully managed: Google Cloud manages the infrastructure, so you can focus on your data and analysis.

- Scalable: Dataflow can automatically scale the number of workers up and down to match the workload.

- Portable: You can write your pipeline in multiple languages, and you can run it on multiple execution engines (Dataflow, Apache Flink, Apache Spark, etc.).

- Flexible: You can create simple or complex pipelines.

- Observable: You can monitor your pipeline with the Dataflow monitoring interface.
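
To make the portability point more concrete: in the Beam Python SDK, the execution engine is normally chosen through pipeline options rather than in the pipeline code. The sketch below shows one possible way to build those options. The helpers `runner_args` and `make_options` are hypothetical names for this example, and the project, region, and bucket values in the usage note are placeholders, not real resources.

```python
def runner_args(runner, **options):
    """Build command-line style flags selecting a runner and its settings."""
    args = [f"--runner={runner}"]
    # Sort option names so the flag order is stable and reproducible.
    args += [f"--{name}={value}" for name, value in sorted(options.items())]
    return args


def make_options(runner, **options):
    """Wrap the flags in a PipelineOptions object (requires apache-beam)."""
    # Assumption: apache-beam is installed. It is imported here so that
    # runner_args can be used on its own.
    from apache_beam.options.pipeline_options import PipelineOptions

    return PipelineOptions(runner_args(runner, **options))
```

For a local test run, `make_options("DirectRunner")` is enough. To target Dataflow, something like `make_options("DataflowRunner", project="my-project", region="us-central1", temp_location="gs://my-bucket/tmp")` could be passed as `beam.Pipeline(options=...)`, leaving the pipeline code itself unchanged.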

Use cases

- Moving data: Dataflow can be used to move data from one place to another.

- Real-time analytics: Dataflow can be used to analyze data in real time.

- ETL: Dataflow can be used to extract, transform, and load data.

- ...

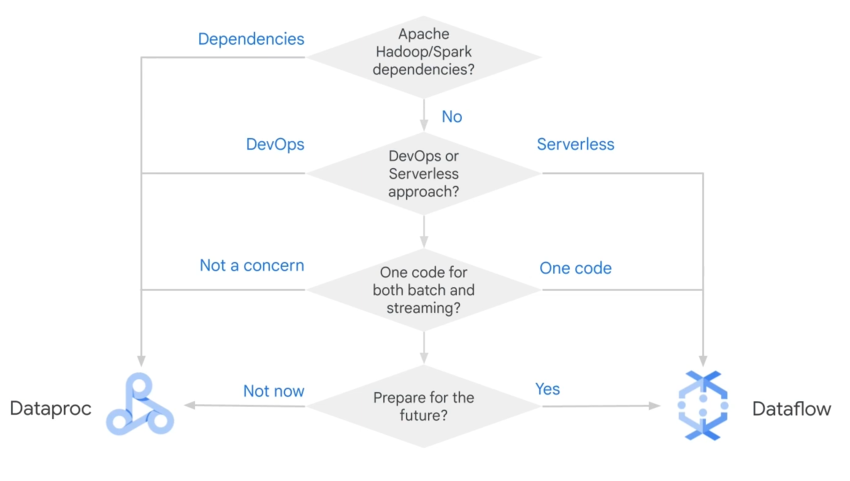

Dataflow or Dataproc?